Discovery Failed to Request Cluster Info

Editor – This seven‑part series of articles is now complete:

You can also download the complete set of articles, plus information about implementing microservices using NGINX Plus, as an ebook – Microservices: From Design to Deployment. Also, please look at the new Microservices Solutions page.

This is the fourth article in our series about building applications with microservices. The first article introduces the Microservices Architecture pattern and discusses the benefits and drawbacks of using microservices. The second and third articles in the series describe different aspects of communication within a microservices architecture. In this article, we explore the closely related problem of service discovery.

Why Use Service Discovery?

Let's imagine that you are writing some code that invokes a service that has a REST API or Thrift API. In order to make a request, your code needs to know the network location (IP address and port) of a service instance. In a traditional application running on physical hardware, the network locations of service instances are relatively static. For example, your code can read the network locations from a configuration file that is occasionally updated.

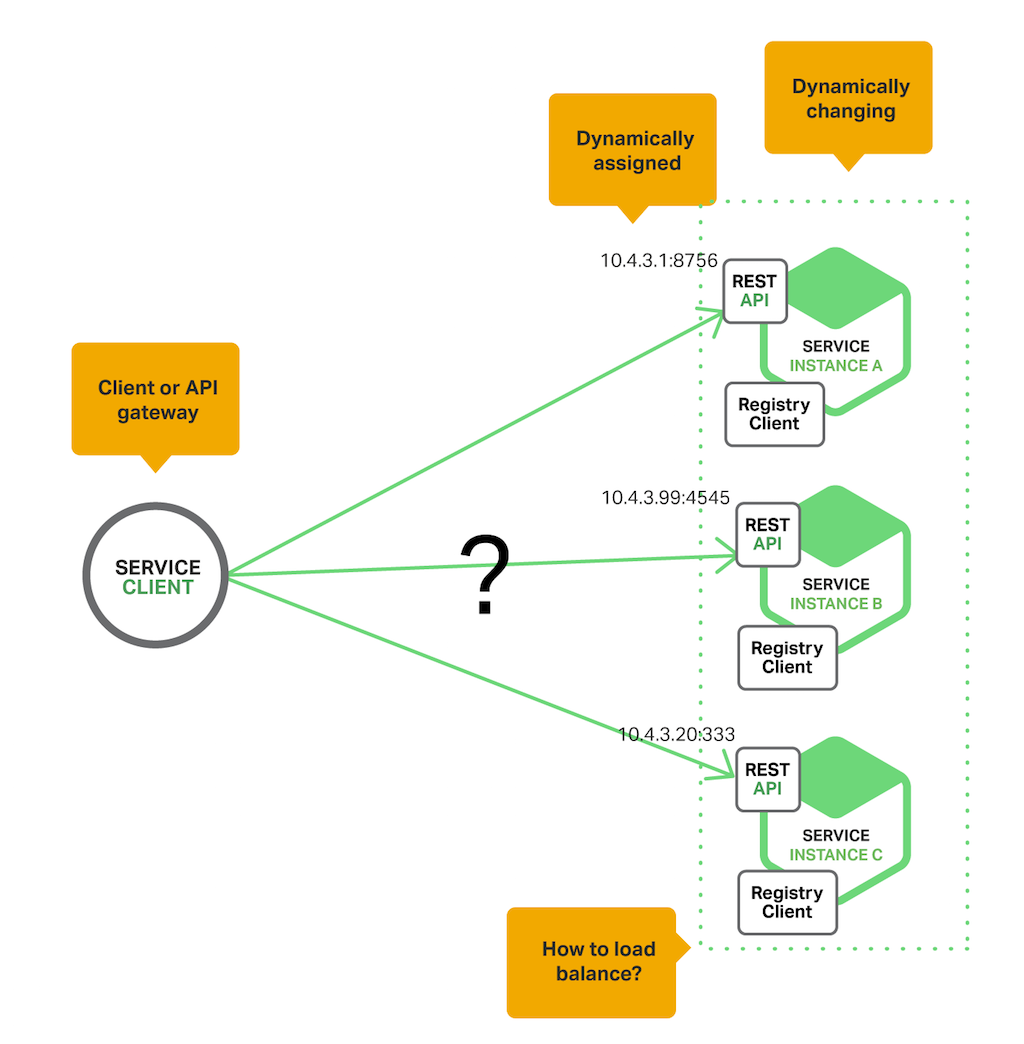

In a modern, cloud‑based microservices application, however, this is a much more difficult problem to solve as shown in the following diagram.

Service instances have dynamically assigned network locations. Moreover, the set of service instances changes dynamically because of autoscaling, failures, and upgrades. Consequently, your client code needs to use a more elaborate service discovery mechanism.

There are two main service discovery patterns: client‑side discovery and server‑side discovery. Let's first look at client‑side discovery.

The Client‑Side Discovery Pattern

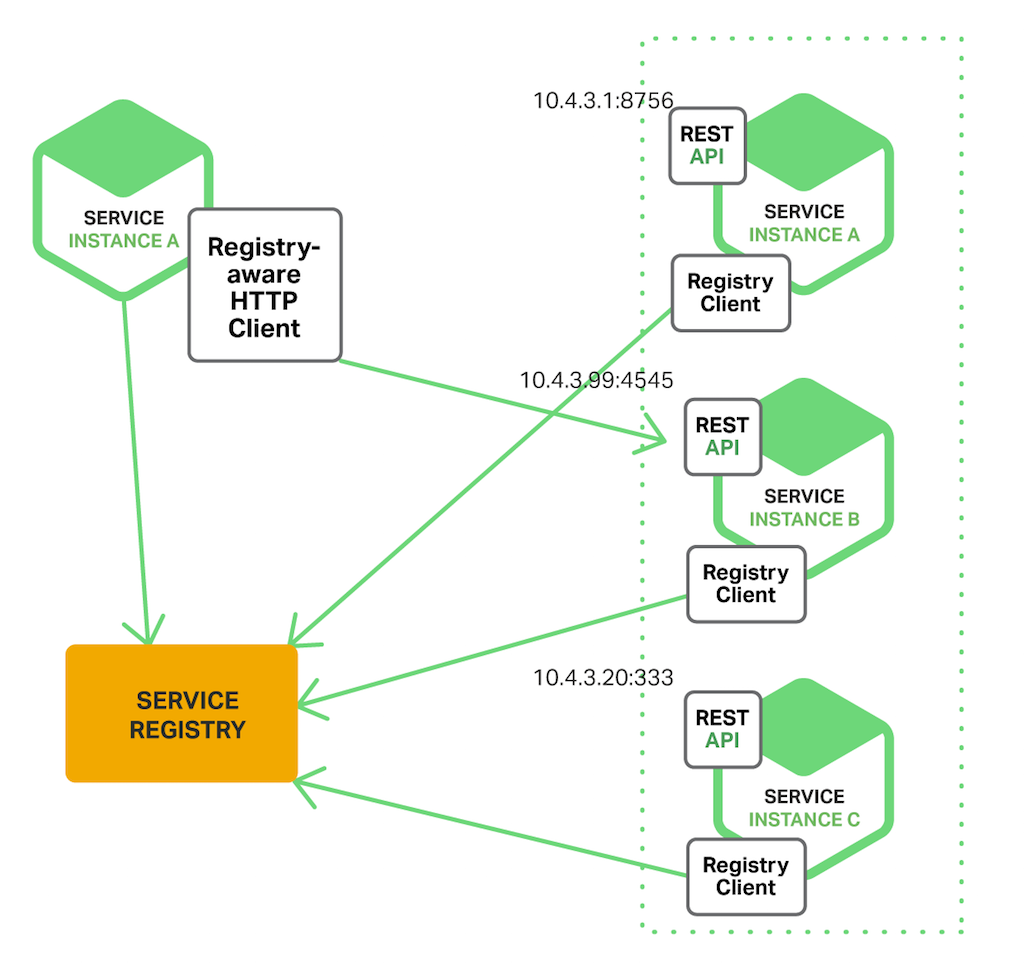

When using customer‑side discovery, the customer is responsible for determining the network locations of available service instances and load balancing requests beyond them. The client queries a service registry, which is a database of bachelor service instances. The client then uses a load‑balancing algorithm to select one of the available service instances and makes a request.

The following diagram shows the structure of this design.
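As a rough illustration of the client's role in this pattern, here is a minimal Python sketch. The `ServiceRegistry` and `DiscoveryClient` classes, the service name, and the addresses are all hypothetical stand-ins invented for this example, and round-robin is just one possible load‑balancing algorithm.

```python
import itertools
from typing import Dict, List, Tuple

Instance = Tuple[str, int]  # (host, port)


class ServiceRegistry:
    """Hypothetical in-memory stand-in for a real service registry."""

    def __init__(self) -> None:
        self._instances: Dict[str, List[Instance]] = {}

    def register(self, service: str, instance: Instance) -> None:
        self._instances.setdefault(service, []).append(instance)

    def lookup(self, service: str) -> List[Instance]:
        # Return a copy so callers cannot mutate the registry's state.
        return list(self._instances.get(service, []))


class DiscoveryClient:
    """Client that queries the registry, then load balances round-robin."""

    def __init__(self, registry: ServiceRegistry) -> None:
        self._registry = registry
        self._counter = itertools.count()

    def choose(self, service: str) -> Instance:
        instances = self._registry.lookup(service)
        if not instances:
            raise LookupError(f"no available instances of {service!r}")
        # Rotate through the instances returned by the registry.
        return instances[next(self._counter) % len(instances)]


registry = ServiceRegistry()
registry.register("orders", ("10.0.0.1", 8080))
registry.register("orders", ("10.0.0.2", 8080))

client = DiscoveryClient(registry)
picks = [client.choose("orders") for _ in range(3)]
```

The point of the sketch is that both the lookup and the instance selection live in the client, which is what makes application‑specific load balancing possible.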

The network location of a service instance is registered with the service registry when it starts up. It is removed from the service registry when the instance terminates. The service instance's registration is typically refreshed periodically using a heartbeat mechanism.
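The register, heartbeat, and remove lifecycle can be sketched as a registry that stores an expiry time per instance. This is an illustrative model only: the `LeaseRegistry` class, its method names, and the injectable clock are assumptions made for the example, not the behavior of any particular registry product.

```python
import time
from typing import Callable, Dict, List


class LeaseRegistry:
    """Hypothetical registry in which each registration expires unless refreshed."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the example can control time
        self._expires_at: Dict[str, float] = {}

    def register(self, instance_id: str) -> None:
        self._expires_at[instance_id] = self._clock() + self._ttl

    def heartbeat(self, instance_id: str) -> None:
        # A heartbeat simply pushes the expiry time further into the future.
        if instance_id not in self._expires_at:
            raise KeyError(instance_id)
        self._expires_at[instance_id] = self._clock() + self._ttl

    def deregister(self, instance_id: str) -> None:
        self._expires_at.pop(instance_id, None)

    def available(self) -> List[str]:
        # Instances whose lease has lapsed are treated as unavailable.
        now = self._clock()
        return sorted(i for i, exp in self._expires_at.items() if exp > now)


# Usage with a fake clock: "b" never sends a heartbeat and drops out.
now = [0.0]
leases = LeaseRegistry(ttl_seconds=30, clock=lambda: now[0])
leases.register("a")
leases.register("b")
now[0] = 20.0
leases.heartbeat("a")
now[0] = 40.0
still_available = leases.available()
```

A production registry would also purge lapsed entries and replicate its state; both are left out to keep the sketch short.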

Netflix OSS provides a great example of the client‑side discovery pattern. Netflix Eureka is a service registry. It provides a REST API for managing service‑instance registration and for querying available instances. Netflix Ribbon is an IPC client that works with Eureka to load balance requests across the available service instances. We will discuss Eureka in more depth later in this article.

The client‑side discovery pattern has a variety of benefits and drawbacks. This pattern is relatively straightforward and, except for the service registry, there are no other moving parts. Also, since the client knows about the available service instances, it can make intelligent, application‑specific load‑balancing decisions such as using consistent hashing. One significant drawback of this pattern is that it couples the client with the service registry. You must implement client‑side service discovery logic for each programming language and framework used by your service clients.
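To make the consistent hashing remark concrete, here is a small Python sketch of a hash ring that a client could use to pick an instance for a given request key. The class name, the number of virtual points per instance, and the choice of SHA‑256 are assumptions made for illustration.

```python
import bisect
import hashlib
from typing import List, Tuple


def _hash(key: str) -> int:
    # Use the first 8 bytes of SHA-256 as a stable 64-bit position on the ring.
    return int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest()[:8], "big")


class ConsistentHashRing:
    """Hypothetical ring mapping request keys to service instances."""

    def __init__(self, instances: List[str], replicas: int = 100) -> None:
        points: List[Tuple[int, str]] = []
        for instance in instances:
            # Several virtual points per instance spread the load more evenly.
            for i in range(replicas):
                points.append((_hash(f"{instance}#{i}"), instance))
        points.sort()
        self._ring = points
        self._hashes = [h for h, _ in points]

    def pick(self, request_key: str) -> str:
        if not self._ring:
            raise LookupError("no instances on the ring")
        # Walk clockwise to the first point after the key, wrapping at the end.
        index = bisect.bisect_right(self._hashes, _hash(request_key)) % len(self._ring)
        return self._ring[index][1]


ring = ConsistentHashRing(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
target = ring.pick("user-42")
```

The useful property is that when an instance disappears, only the keys that mapped to it move to a different instance; all other keys keep their assignment.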

Now that we have looked at client‑side discovery, let's take a look at server‑side discovery.

The Server‑Side Discovery Pattern

The other approach to service discovery is the server-side discovery pattern. The following diagram shows the structure of this pattern.

The client makes a request to a service via a load balancer. The load balancer queries the service registry and routes each request to an available service instance. As with client‑side discovery, service instances are registered and deregistered with the service registry.
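The following Python sketch shows the same idea from the load balancer's point of view. Everything here is hypothetical: service instances are modeled as plain callables, and the `ServiceRegistry` and `LoadBalancer` classes exist only for this example.

```python
import random
from typing import Callable, Dict, Optional

Handler = Callable[[str], str]  # a service instance modeled as a function


class ServiceRegistry:
    """Hypothetical in-memory registry of instances per service."""

    def __init__(self) -> None:
        self._services: Dict[str, Dict[str, Handler]] = {}

    def register(self, service: str, instance_id: str, handler: Handler) -> None:
        self._services.setdefault(service, {})[instance_id] = handler

    def deregister(self, service: str, instance_id: str) -> None:
        self._services.get(service, {}).pop(instance_id, None)

    def lookup(self, service: str) -> Dict[str, Handler]:
        return dict(self._services.get(service, {}))


class LoadBalancer:
    """Queries the registry on behalf of clients and forwards each request."""

    def __init__(self, registry: ServiceRegistry,
                 rng: Optional[random.Random] = None) -> None:
        self._registry = registry
        self._rng = rng or random.Random()

    def forward(self, service: str, request: str) -> str:
        instances = self._registry.lookup(service)
        if not instances:
            raise LookupError(f"no available instances of {service!r}")
        # Random choice is used here; any load-balancing policy would do.
        instance_id = self._rng.choice(sorted(instances))
        return instances[instance_id](request)


registry = ServiceRegistry()
registry.register("orders", "orders-1", lambda req: f"orders-1 handled {req}")
registry.register("orders", "orders-2", lambda req: f"orders-2 handled {req}")

balancer = LoadBalancer(registry)
response = balancer.forward("orders", "GET /orders/7")
```

Note that the client only ever calls `balancer.forward`; it never sees the registry, which is the key difference from client‑side discovery.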

The AWS Elastic Load Balancer (ELB) is an example of a server-side discovery router. An ELB is commonly used to load balance external traffic from the Internet. However, you can also use an ELB to load balance traffic that is internal to a virtual private cloud (VPC). A client makes requests (HTTP or TCP) via the ELB using its DNS name. The ELB load balances the traffic among a set of registered Elastic Compute Cloud (EC2) instances or EC2 Container Service (ECS) containers. There isn't a separate service registry. Instead, EC2 instances and ECS containers are registered with the ELB itself.

HTTP servers and load balancers such as NGINX Plus and NGINX can also be used as a server-side discovery load balancer. For example, this blog post describes using Consul Template to dynamically reconfigure NGINX reverse proxying. Consul Template is a tool that periodically regenerates arbitrary configuration files from configuration data stored in the Consul service registry. It runs an arbitrary shell command whenever the files change. In the example described by the blog post, Consul Template generates an nginx.conf file, which configures the reverse proxying, and then runs a command that tells NGINX to reload the configuration. A more sophisticated implementation could dynamically reconfigure NGINX Plus using either its HTTP API or DNS.
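The regenerate-then-reload idea can be sketched in a few lines of Python. This is not Consul Template and does not use its template syntax; `render_upstream` and `sync_config` are hypothetical helpers, and the reload step is passed in as a callback that, in a real setup, might run a command such as `nginx -s reload`.

```python
from pathlib import Path
from typing import Callable, Iterable, Tuple


def render_upstream(name: str, instances: Iterable[Tuple[str, int]]) -> str:
    """Render an NGINX upstream block listing the given (host, port) pairs."""
    lines = [f"upstream {name} {{"]
    for host, port in sorted(instances):
        lines.append(f"    server {host}:{port};")
    lines.append("}")
    return "\n".join(lines) + "\n"


def sync_config(path: Path, content: str, reload: Callable[[], None]) -> bool:
    """Write the config and trigger a reload only if the content changed."""
    if path.exists() and path.read_text() == content:
        return False  # nothing changed, so no reload is needed
    path.write_text(content)
    reload()  # assumption: the caller supplies the actual reload command
    return True
```

A caller would fetch the current instance list from the registry on a timer, pass it to `render_upstream`, and hand the result to `sync_config`, so that NGINX is reloaded only when the set of instances actually changes.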

Some deployment environments such as Kubernetes and Marathon run a proxy on each host in the cluster. The proxy plays the role of a server‑side discovery load balancer. In order to make a request to a service, a client routes the request via the proxy using the host's IP address and the service's assigned port. The proxy then transparently forwards the request to an available service instance running somewhere in the cluster.

The server‑side discovery pattern has several benefits and drawbacks. One great benefit of this pattern is that details of discovery are abstracted away from the client. Clients simply make requests to the load balancer. This eliminates the need to implement discovery logic for each programming language and framework used by your service clients. Also, as mentioned above, some deployment environments provide this functionality for free. This pattern also has some drawbacks, however. Unless the load balancer is provided by the deployment environment, it is yet another highly available system component that you need to set up and manage.

The Service Registry

The service registry is a key part of service discovery. It is a database containing the network locations of service instances. A service registry needs to be highly available and up to date. Clients can cache network locations obtained from the service registry. However, that information eventually becomes out of date and clients become unable to discover service instances. Consequently, a service registry consists of a cluster of servers that use a replication protocol to maintain consistency.

As mentioned earlier, Netflix Eureka is a good example of a service registry. It provides a REST API for registering and querying service instances. A service instance registers its network location using a POST request. Every 30 seconds it must refresh its registration using a PUT request. A registration is removed by either using an HTTP DELETE request or by the instance registration timing out. As you might expect, a client can retrieve the registered service instances by using an HTTP GET request.
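The mapping of registry operations to HTTP verbs can be illustrated with Python's standard library. Only the verbs and the 30‑second interval come from the description above. The base URL, the path layout, and the JSON payload are placeholders assumed for this sketch, so check the Eureka documentation for the real resource paths and instance format. The requests are built but never sent.

```python
import json
import urllib.request

# Hypothetical server address and path layout, assumed for illustration only.
BASE_URL = "http://eureka.example.internal:8080/eureka/v2"
HEARTBEAT_INTERVAL_SECONDS = 30  # refresh interval stated in the article


def register_request(app: str, instance_id: str, host: str, port: int) -> urllib.request.Request:
    # Placeholder payload; a real registry defines its own instance schema.
    body = json.dumps({"instanceId": instance_id, "hostName": host, "port": port})
    return urllib.request.Request(
        f"{BASE_URL}/apps/{app}",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def heartbeat_request(app: str, instance_id: str) -> urllib.request.Request:
    # Sent periodically to keep the registration from timing out.
    return urllib.request.Request(f"{BASE_URL}/apps/{app}/{instance_id}", method="PUT")


def deregister_request(app: str, instance_id: str) -> urllib.request.Request:
    return urllib.request.Request(f"{BASE_URL}/apps/{app}/{instance_id}", method="DELETE")


def query_request(app: str) -> urllib.request.Request:
    return urllib.request.Request(f"{BASE_URL}/apps/{app}", method="GET")
```

Passing any of these objects to `urllib.request.urlopen` would perform the call; in practice a client library normally handles this on the service's behalf.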

Netflix achieves high availability by running one or more Eureka servers in each Amazon EC2 availability zone. Each Eureka server runs on an EC2 instance that has an Elastic IP address. DNS TEXT records are used to store the Eureka cluster configuration, which is a map from availability zones to a list of the network locations of Eureka servers. When a Eureka server starts up, it queries DNS to retrieve the Eureka cluster configuration, locates its peers, and assigns itself an unused Elastic IP address.

Eureka clients – services and service clients – query DNS to find the network locations of Eureka servers. Clients prefer to use a Eureka server in the same availability zone. However, if none is available, the client uses a Eureka server in another availability zone.
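The zone preference described here amounts to a simple selection rule, sketched below in Python. The function name, the zone names, the addresses, and the `is_available` callback are all hypothetical; the sketch is not taken from the Eureka client.

```python
from typing import Callable, Dict, List


def choose_registry_server(servers_by_zone: Dict[str, List[str]],
                           local_zone: str,
                           is_available: Callable[[str], bool]) -> str:
    """Prefer a server in the caller's zone, else fall back to another zone."""
    for server in servers_by_zone.get(local_zone, []):
        if is_available(server):
            return server
    # No local server is reachable, so try the remaining zones in a stable order.
    for zone in sorted(servers_by_zone):
        if zone == local_zone:
            continue
        for server in servers_by_zone[zone]:
            if is_available(server):
                return server
    raise LookupError("no registry server is available in any zone")


cluster = {
    "zone-a": ["10.0.1.10:8761"],
    "zone-b": ["10.0.2.10:8761"],
}
down = {"10.0.1.10:8761"}
chosen = choose_registry_server(cluster, "zone-a", lambda s: s not in down)
```

In this usage the local server in `zone-a` is marked as down, so the client falls back to the server in `zone-b`.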

Other examples of service registries include:

- etcd – A highly available, distributed, consistent, key‑value store that is used for shared configuration and service discovery. Two notable projects that use etcd are Kubernetes and Cloud Foundry.

- Consul – A tool for discovering and configuring services. It provides an API that allows clients to register and discover services. Consul can perform health checks to determine service availability.

- Apache ZooKeeper – A widely used, high‑performance coordination service for distributed applications. Apache ZooKeeper was originally a subproject of Hadoop but is now a top‑level project.

Also, as noted previously, some systems such as Kubernetes, Marathon, and AWS do not have an explicit service registry. Instead, the service registry is just a built‑in part of the infrastructure.

Now that we have looked at the concept of a service registry, let's look at how service instances are registered with the service registry.

Service Registration Options

As previously mentioned, service instances must be registered with and deregistered from the service registry. There are a couple of different ways to handle the registration and deregistration. One option is for service instances to register themselves, the self‑registration pattern. The other option is for another system component to manage the registration of service instances, the third‑party registration pattern. Let's first look at the self‑registration pattern.

The Self‑Registration Pattern

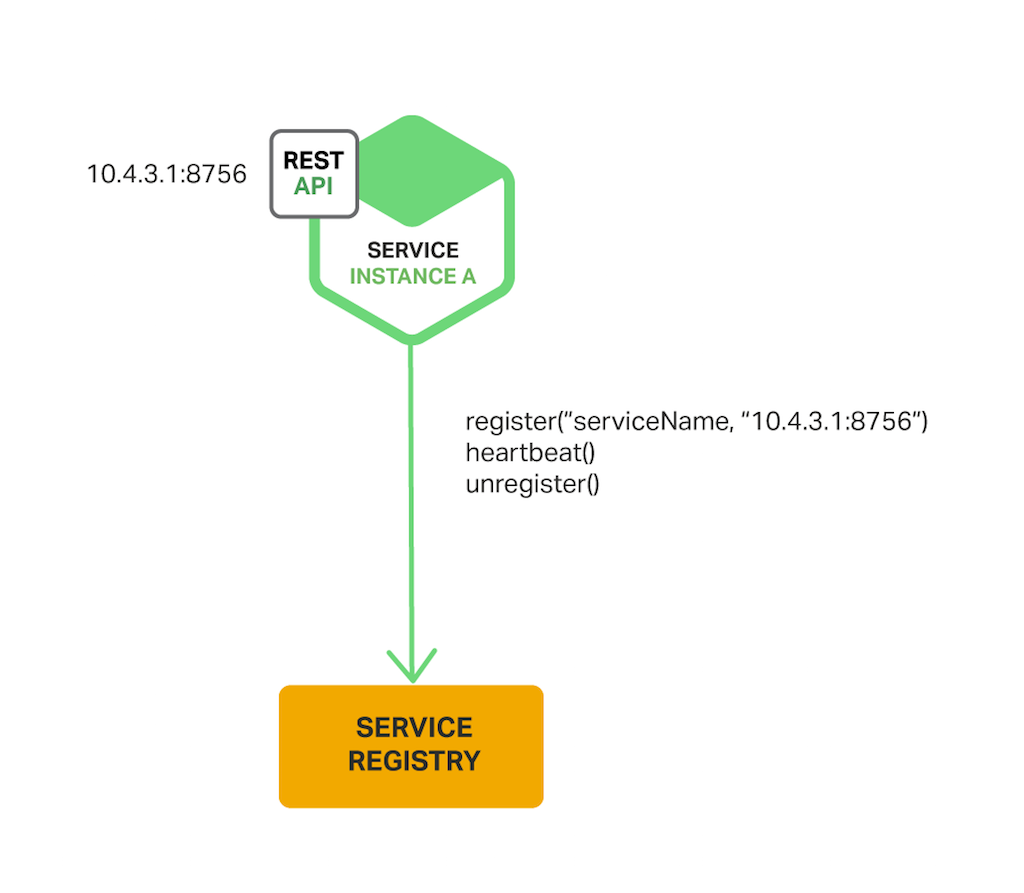

When using the self‑registration pattern, a service example is responsible for registering and deregistering itself with the service registry. Also, if required, a service instance sends heartbeat requests to prevent its registration from expiring. The following diagram shows the construction of this blueprint.

A good example of this approach is the Netflix OSS Eureka client. The Eureka client handles all aspects of service instance registration and deregistration. The Spring Cloud project, which implements various patterns including service discovery, makes it easy to automatically register a service instance with Eureka. You simply annotate your Java Configuration class with an @EnableEurekaClient annotation.

The self‑registration pattern has various benefits and drawbacks. One benefit is that it is relatively simple and doesn't require any other system components. However, a major drawback is that it couples the service instances to the service registry. You must implement the registration code in each programming language and framework used by your services.
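To show what that registration code typically has to do, here is a minimal Python sketch of the self‑registration lifecycle. The `RecordingRegistry` class and the `self_registered` context manager are hypothetical names invented for this example; a real service would talk to an actual registry and send heartbeats on a timer.

```python
from contextlib import contextmanager
from typing import Callable, Iterator, List, Set


class RecordingRegistry:
    """Hypothetical registry stub that records what a service instance does."""

    def __init__(self) -> None:
        self.registered: Set[str] = set()
        self.heartbeats: List[str] = []

    def register(self, instance_id: str) -> None:
        self.registered.add(instance_id)

    def heartbeat(self, instance_id: str) -> None:
        if instance_id not in self.registered:
            raise KeyError(instance_id)
        self.heartbeats.append(instance_id)

    def deregister(self, instance_id: str) -> None:
        self.registered.discard(instance_id)


@contextmanager
def self_registered(registry: RecordingRegistry,
                    instance_id: str) -> Iterator[Callable[[], None]]:
    """Register on startup, expose a heartbeat hook, deregister on shutdown."""
    registry.register(instance_id)
    try:
        # The service calls the yielded function periodically while running.
        yield lambda: registry.heartbeat(instance_id)
    finally:
        # Runs on both clean shutdown and failure inside the block.
        registry.deregister(instance_id)


registry = RecordingRegistry()
with self_registered(registry, "orders-1") as send_heartbeat:
    send_heartbeat()
    registered_while_running = "orders-1" in registry.registered
```

Because this logic runs inside the service process, it has to be rewritten for every language and framework in use, which is the coupling described above.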

The alternative approach, which decouples services from the service registry, is the third‑party registration pattern.

The Third‑Party Registration Pattern

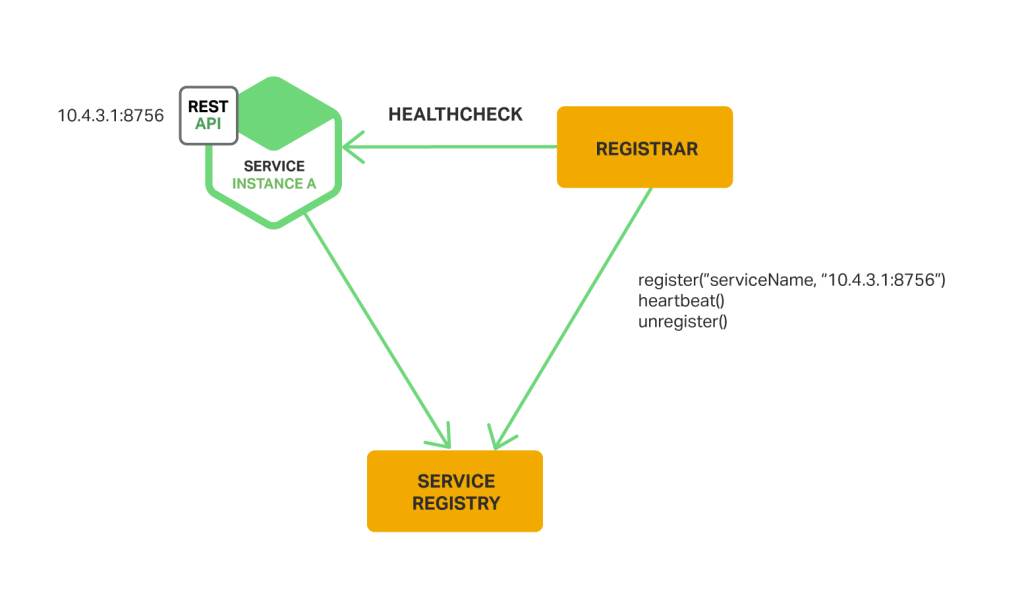

When using the third-political party registration pattern, service instances aren't responsible for registering themselves with the service registry. Instead, another system component known equally the service registrar handles the registration. The service registrar tracks changes to the set of running instances by either polling the deployment surroundings or subscribing to events. When it notices a newly bachelor service instance information technology registers the example with the service registry. The service registrar too deregisters terminated service instances. The following diagram shows the structure of this pattern.
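A polling registrar boils down to comparing the instances it saw last time with the instances running now. The Python sketch below illustrates that reconciliation step; the `ServiceRegistrar` and `SetRegistry` classes and the `list_running` callback are assumptions made for the example and do not reflect how any specific registrar is implemented.

```python
from typing import Callable, Iterable, Set, Tuple


class SetRegistry:
    """Hypothetical registry stub backed by a set of instance IDs."""

    def __init__(self) -> None:
        self.instances: Set[str] = set()

    def register(self, instance_id: str) -> None:
        self.instances.add(instance_id)

    def deregister(self, instance_id: str) -> None:
        self.instances.discard(instance_id)


class ServiceRegistrar:
    """Polls the deployment environment and keeps the registry in sync."""

    def __init__(self, list_running: Callable[[], Iterable[str]],
                 registry: SetRegistry) -> None:
        self._list_running = list_running
        self._registry = registry
        self._known: Set[str] = set()

    def poll_once(self) -> Tuple[Set[str], Set[str]]:
        running = set(self._list_running())
        started = running - self._known   # newly available instances
        stopped = self._known - running   # terminated instances
        for instance_id in sorted(started):
            self._registry.register(instance_id)
        for instance_id in sorted(stopped):
            self._registry.deregister(instance_id)
        self._known = running
        return started, stopped


environment = {"orders-1", "orders-2"}
registry = SetRegistry()
registrar = ServiceRegistrar(lambda: environment, registry)
first_poll = registrar.poll_once()
```

An event‑driven registrar would apply the same register and deregister calls, but in response to start and stop notifications instead of a periodic diff.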

One example of a service registrar is the open source Registrator project. It automatically registers and deregisters service instances that are deployed as Docker containers. Registrator supports several service registries, including etcd and Consul.

Another example of a service registrar is NetflixOSS Prana. Primarily intended for services written in non‑JVM languages, it is a sidecar application that runs side by side with a service instance. Prana registers and deregisters the service instance with Netflix Eureka.

The service registrar is a built‑in component of some deployment environments. The EC2 instances created by an Autoscaling Group can be automatically registered with an ELB. Kubernetes services are automatically registered and made available for discovery.

The third‑party registration pattern has various benefits and drawbacks. A major benefit is that services are decoupled from the service registry. You don't need to implement service‑registration logic for each programming language and framework used by your developers. Instead, service instance registration is handled in a centralized manner within a dedicated service.

One drawback of this pattern is that unless it's built into the deployment environment, it is yet another highly available system component that you need to set up and manage.

Summary

In a microservices application, the set of running service instances changes dynamically. Instances have dynamically assigned network locations. Consequently, in order for a client to make a request to a service it must use a service‑discovery mechanism.

A key part of service discovery is the service registry. The service registry is a database of available service instances. The service registry provides a management API and a query API. Service instances are registered with and deregistered from the service registry using the management API. The query API is used by system components to discover available service instances.

There are two main service‑discovery patterns: client‑side discovery and server‑side discovery. In systems that use client‑side service discovery, clients query the service registry, select an available instance, and make a request. In systems that use server‑side discovery, clients make requests via a router, which queries the service registry and forwards the request to an available instance.

There are two primary ways that service instances are registered with and deregistered from the service registry. One option is for service instances to register themselves with the service registry, the self‑registration pattern. The other option is for some other system component to handle the registration and deregistration on behalf of the service, the third‑party registration pattern.

In some deployment environments you need to set up your own service‑discovery infrastructure using a service registry such as Netflix Eureka, etcd, or Apache ZooKeeper. In other deployment environments, service discovery is built in. For example, Kubernetes and Marathon handle service instance registration and deregistration. They also run a proxy on each cluster host that plays the role of a server‑side discovery router.

An HTTP reverse proxy and load balancer such as NGINX can also be used as a server‑side discovery load balancer. The service registry can push the routing information to NGINX and invoke a graceful configuration update; for example, you can use Consul Template. NGINX Plus supports additional dynamic reconfiguration mechanisms – it can pull information about service instances from the registry using DNS, and it provides an API for remote reconfiguration.

In future blog posts, we'll continue to dive into other aspects of microservices. Sign up to the NGINX mailing list (form is below) to be notified of the release of future articles in the series.


Guest blogger Chris Richardson is the founder of the original CloudFoundry.com, an early Java PaaS (Platform as a Service) for Amazon EC2. He now consults with organizations to improve how they develop and deploy applications. He also blogs regularly about microservices at https://microservices.io.

Source: https://www.nginx.com/blog/service-discovery-in-a-microservices-architecture/
